Executive Summary

We asked six agentic tools to plan a project: monday.com, Notion, Copilot, ChatGPT, ClickUp Certified Agents, and ClickUp Super Agents. Here's what we found:

➡️ ClickUp Certified Agents were the only agents that consistently hit "plan ready to run" across all six project criteria: ClickUp's Certified Agent scored 96 out of 100 in a direct benchmark of execution‑ready project plans. It read the brief from the source, created rich project structures in ClickUp, wired dependencies, and documented baselines in ways leaders can use.

➡️ ClickUp Super Agents delivered a strong baseline build: They detected context automatically and created usable plans inside ClickUp, with solid baselines and clear communication. The Super Agent scored 77 out of 100.

➡️ Copilot and ChatGPT could get into the project details, but only after meaningful integration work: Without careful wiring, they produced good narratives but thin plans inside the work tools. They scored in the 50-60 range.

➡️ Notion and monday.com agents struggled with the core objective: They could draft tasks and lists, but left much of the stitching and cleanup to human teams. They scored in the 40-50 range.

Because ClickUp Certified Agents are built, rigorously tested, and maintained by ClickUp AI experts, they produced end-to-end solutions that were deeply integrated into ClickUp workflows and optimized for performance.

Jay Hack, Head of Artificial Intelligence at ClickUp

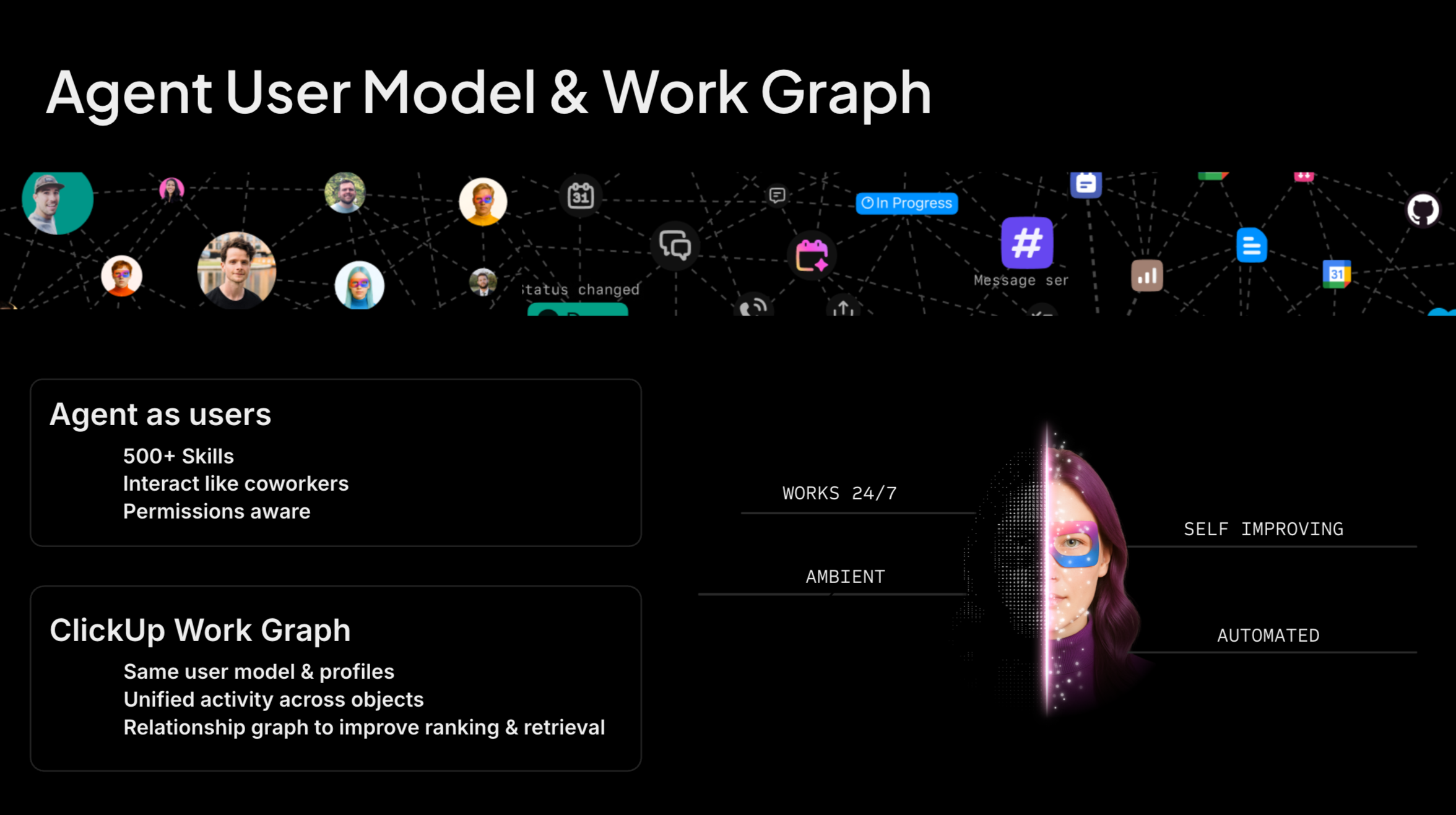

The limiting factor is no longer model intelligence. It's whether your agents can see the right context, act in the right places, and behave like teammates.